Bash scripting for Debian server automation remains essential in 2026, even as AI coding assistants handle much of the syntax. Tools like Claude Code can generate scripts on demand, but understanding Bash yourself lets you architect robust solutions, audit AI-generated code, and troubleshoot production issues. This guide focuses on what matters most: logic, patterns, and best practices for automating Debian servers in modern infrastructure.

Why Bash Still Dominates Debian Server Automation in 2026

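Debian 12 (Bookworm) ships Bash 5.2 as the default interactive login shell, and Debian-based distributions such as Ubuntu include Bash as well. (On Debian, /bin/sh itself points to dash, so scripts that rely on Bash features need an explicit #!/bin/bash shebang.) Almost every server task, from backups to deployments, eventually involves shell scripting. While Python and Go offer more features for complex applications, Bash excels at system administration because: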

- Universal availability: No runtime dependencies, guaranteed presence on every Debian system

- Direct system access: Native integration with Linux utilities (grep, awk, rsync, systemd)

- Rapid prototyping: Test commands interactively, then script them

- Resource efficiency: Minimal overhead for automation tasks

The 2026 shift: focus moves from memorizing syntax to designing logic and auditing for failure modes.

Modern Bash Scripting Workflow: AI-Assisted Development

The traditional approach, writing every script line by line from memory, is giving way to an AI-assisted loop:

1. Define Context and Requirements

Before generating code, specify your environment precisely:

"Target: Debian 12 (Bookworm) server, Bash 5.2. Task: Automated backup script that syncs /var/log to remote server, checks disk space before transfer, logs to OpenTelemetry for monitoring. Must handle network failures gracefully and send alerts on errors."

Include permissions, expected file sizes, retry logic, and failure scenarios.

2. Prompt for Complete Solutions, Not Snippets

AI assistants like Claude Code generate full automation suites when properly prompted. Request:

- Complete script with error handling

- Usage documentation

- Example systemd timer for scheduling

- Test cases for critical paths

3. Audit for Failure Modes

This is where human expertise matters. Review AI-generated scripts for:

- Race conditions: Concurrent executions, file locking

- Permission issues: Running as wrong user, SELinux/AppArmor conflicts

- Locale dependencies: Date formats, character encoding

- Path assumptions: Hardcoded directories, missing dependencies

Ask the AI: "Identify three failure points in this script related to permissions or network timeouts."
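
The race-condition item in the audit list above is often handled with a lock file. The following is a minimal sketch, assuming flock from util-linux is available (it normally is on Debian); the helper name run_exclusive, the file descriptor number, and the exit code 75 are illustrative choices, not a fixed convention.

```shell
#!/bin/bash
# Sketch: allow only one instance of a job at a time using flock(1).
# run_exclusive is a hypothetical helper; 75 is an arbitrary "busy" exit code.

run_exclusive() {
  local lock_file=$1
  shift
  (
    # Take an exclusive lock on fd 9 without waiting; bail out if it is held
    flock -n 9 || { echo "another instance holds $lock_file" >&2; exit 75; }
    "$@"
  ) 9>"$lock_file"
  # The lock is released when the subshell exits and fd 9 is closed
}

# Usage: wrap the real job in the helper
lock_file=$(mktemp)
run_exclusive "$lock_file" echo "got the lock, running job"
```

Because the kernel drops the lock when the holding process exits, a crashed run does not leave a stale lock behind, which is the main advantage over checking for a PID file by hand.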

4. Validate with ShellCheck and Containers

Run generated scripts through ShellCheck for linting:

    shellcheck --severity=warning backup_script.sh

Test in Docker containers before production deployment:

    docker run -it debian:12 /bin/bash
    # Copy script, test all code paths

This prevents "works on my laptop" issues caused by environment differences.

Essential Bash Patterns for Debian Automation

Certain patterns appear in virtually every production script. Master these concepts, and AI can handle implementation details.

Strict Error Handling

Every production script should start with:

    #!/bin/bash
    set -euo pipefail

This enables:

- -e: Exit immediately if any command fails

- -u: Treat unset variables as errors

- -o pipefail: Pipeline fails if any command in it fails

Without these, scripts continue execution after errors, leading to cascading failures.
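
To see why pipefail matters, the following minimal sketch compares the exit status of the same failing pipeline with and without the option. The function names are illustrative and not part of any tool.

```shell
#!/bin/bash
# Sketch: how pipefail changes the exit status of a pipeline.
# Function names are hypothetical, chosen for illustration.

# First stage fails, last stage succeeds, pipefail off
status_without_pipefail() {
  (
    set +o pipefail
    rc=0
    false | cat || rc=$?
    echo "$rc"
  )
}

# Same pipeline with pipefail on
status_with_pipefail() {
  (
    set -o pipefail
    rc=0
    false | cat || rc=$?
    echo "$rc"
  )
}

echo "without pipefail: $(status_without_pipefail)"
echo "with pipefail:    $(status_with_pipefail)"
```

Without pipefail the pipeline reports the status of its last command (cat, which succeeds), so the failure of the first stage is invisible to set -e.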

Cleanup Handlers with Trap

Always clean up temporary files and resources, even when scripts fail:

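    #!/bin/bash
    set -euo pipefail

    # Use a dedicated name: TMPDIR itself is read by mktemp and other tools
    WORK_DIR=$(mktemp -d)
    # Single quotes defer expansion until the trap actually runs
    trap 'rm -rf "$WORK_DIR"' EXIT

    # Your script logic here
    # WORK_DIR is removed on exit, whether the script succeeds or fails

The trap command registers cleanup commands that run when the script terminates.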
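
A slightly fuller sketch of the same idea uses a named cleanup function, so several resources can be released in one place. The names run_job, cleanup, and work_dir are illustrative; the job runs in a subshell here only so the EXIT trap can be observed firing after both a success and a failure.

```shell
#!/bin/bash
# Sketch: a cleanup function registered with trap, demonstrated in a subshell
# so the EXIT trap fires when the simulated job ends. Names are hypothetical.

run_job() {
  # $1: exit status the simulated job should finish with
  local final_status=$1
  (
    work_dir=$(mktemp -d)
    cleanup() {
      rm -rf "$work_dir"
    }
    trap cleanup EXIT

    # Report the directory so the caller can check it afterwards
    echo "$work_dir"
    touch "$work_dir/scratch.dat"
    exit "$final_status"
  )
}

# The directory is gone after a success and after a failure alike
ok_dir=$(run_job 0)
failed_dir=$(run_job 1) || true
[ ! -d "$ok_dir" ] && echo "cleaned after success"
[ ! -d "$failed_dir" ] && echo "cleaned after failure"
```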

Idempotency – Safe to Run Repeatedly

Automation scripts often run via cron or systemd timers. They must be idempotent—running multiple times produces the same result without side effects.

Bad (non-idempotent):

    echo "new_entry" >> /etc/config

Running twice creates duplicate entries.

Good (idempotent):

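    grep -qxF "new_entry" /etc/config || echo "new_entry" >> /etc/config

The check runs before the write, so repeated runs do not add duplicates. The -x and -F flags make grep match the whole line literally, so a longer line that merely contains "new_entry" does not count as a match.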
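
The check-then-append pattern can be wrapped in a small reusable function. This is a minimal sketch; ensure_line is a hypothetical helper name, and the demo works on a scratch file rather than a real config.

```shell
#!/bin/bash
# Sketch: append a line to a file only when it is not already present.
# ensure_line is a hypothetical helper name.

ensure_line() {
  local line=$1 file=$2
  # Create the file if needed so grep has something to read
  touch "$file"
  # -q: no output, -x: whole-line match, -F: literal string
  if ! grep -qxF -- "$line" "$file"; then
    printf '%s\n' "$line" >> "$file"
  fi
}

# Running it twice leaves exactly one copy of the line
demo_file=$(mktemp)
ensure_line "new_entry" "$demo_file"
ensure_line "new_entry" "$demo_file"
cat "$demo_file"
rm -f "$demo_file"
```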

Atomic Operations

Avoid partial updates that leave systems in broken states. Use atomic moves:

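    # Generate new config in the target directory, so the temporary file
    # is on the same filesystem as the final path (/tmp often is not).
    # mktemp creates it with mode 0600; chmod it if the service needs more.
    NEW_CONFIG=$(mktemp /etc/myapp/config.XXXXXX)
    generate_config > "$NEW_CONFIG"

    # Validate before replacing
    validate_config "$NEW_CONFIG" || { rm -f "$NEW_CONFIG"; exit 1; }

    # Atomic replacement
    mv "$NEW_CONFIG" /etc/myapp/config

The mv command is atomic only when source and destination are on the same filesystem, where it is a single rename. Under that condition readers see either the old config or the new one, never a partially written file.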
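
As a sketch of how the write-then-rename step can be made reusable, the helper below reads new content from standard input, writes it to a temporary file next to the target, and renames it into place. The name atomic_write and the demo file names are assumptions for illustration.

```shell
#!/bin/bash
# Sketch: replace a file atomically by writing a sibling temp file first.
# atomic_write is a hypothetical helper; it reads new content from stdin.

atomic_write() {
  local target=$1
  local dir tmp
  dir=$(dirname "$target")
  # Temp file lives next to the target, so mv is a same-filesystem rename
  tmp=$(mktemp "$dir/.tmp.XXXXXX") || return 1
  if cat > "$tmp"; then
    mv -f "$tmp" "$target"
  else
    rm -f "$tmp"
    return 1
  fi
}

# Usage: the target changes in one step once all content is written
demo_dir=$(mktemp -d)
printf 'port=8080\n' | atomic_write "$demo_dir/app.conf"
printf 'port=9090\n' | atomic_write "$demo_dir/app.conf"
cat "$demo_dir/app.conf"
rm -rf "$demo_dir"
```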

Common Debian Server Automation Tasks

Automated Backups with Rsync

Rsync is the Swiss Army knife of file synchronization:

    #!/bin/bash
    set -euo pipefail

    BACKUP_SOURCE="/var/www"
    BACKUP_DEST="user@backup-server:/backups/www"
    LOG_FILE="/var/log/backup.log"

    # Check available disk space on the source filesystem (require at least 10GB)
    AVAILABLE=$(df -BG --output=avail "$BACKUP_SOURCE" | tail -n 1 | tr -dc '0-9')
    if [ "$AVAILABLE" -lt 10 ]; then
        echo "$(date): Insufficient disk space: ${AVAILABLE}GB" >> "$LOG_FILE"
        exit 1
    fi

    # Sync, deleting files on the destination that were removed from the source
    # (--progress is omitted because the output goes to a log file)
    rsync -avz --delete \
        "$BACKUP_SOURCE/" \
        "$BACKUP_DEST" \
        >> "$LOG_FILE" 2>&1

    echo "$(date): Backup completed successfully" >> "$LOG_FILE"

Key flags:

- -a: Archive mode (preserves permissions, timestamps)

- -v: Verbose output

- -z: Compress during transfer

- --delete: Remove files on destination that no longer exist on source
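
Network transfers fail intermittently, so a backup job usually benefits from retrying before giving up. The following is a minimal sketch of a retry wrapper with a doubling delay; the helper name retry and the attempt and delay values are illustrative assumptions, not part of rsync.

```shell
#!/bin/bash
# Sketch: retry a command with a growing delay between attempts.
# retry is a hypothetical helper; attempts and delay are illustrative values.

retry() {
  local max_attempts=$1 delay=$2
  shift 2
  local attempt=1
  until "$@"; do
    if [ "$attempt" -ge "$max_attempts" ]; then
      echo "giving up after $attempt attempts: $*" >&2
      return 1
    fi
    echo "attempt $attempt failed, retrying in ${delay}s" >&2
    sleep "$delay"
    attempt=$((attempt + 1))
    delay=$((delay * 2))
  done
}

# Example with the placeholder host used in the backup script:
# retry 3 30 rsync -az --delete /var/www/ user@backup-server:/backups/www
```

Doubling the delay gives a briefly unreachable server time to recover without hammering it; a fixed delay would also work for short scripts.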

Log Rotation and Cleanup

Prevent disk space exhaustion by cleaning old logs:

    #!/bin/bash
    set -euo pipefail

    LOG_DIR="/var/log/myapp"
    MAX_AGE=30 # days

    # Delete logs older than MAX_AGE, including already-compressed ones
    find "$LOG_DIR" \( -name "*.log" -o -name "*.log.gz" \) -type f -mtime +"$MAX_AGE" -delete

    # Compress remaining uncompressed logs older than 7 days
    find "$LOG_DIR" -name "*.log" -type f -mtime +7 -exec gzip {} +

    echo "$(date): Log cleanup completed"

The find command does most of the work here: it selects files by name, type, and age, then deletes or compresses them in a single pass.
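
Before letting a cleanup job delete anything, it can help to preview what it would match. This sketch uses the same kind of find expression as a cleanup job, with -print in place of -delete. The helper name list_expired_logs is hypothetical, and touch -d relies on the GNU coreutils syntax that Debian ships.

```shell
#!/bin/bash
# Sketch: list the files a cleanup would remove, without deleting anything.
# list_expired_logs is a hypothetical helper name.

list_expired_logs() {
  local dir=$1 max_age_days=$2
  # Match plain and compressed logs older than the given number of days
  find "$dir" \( -name '*.log' -o -name '*.log.gz' \) -type f \
    -mtime +"$max_age_days" -print
}

# Demo on a scratch directory with one old and one fresh file
demo_dir=$(mktemp -d)
touch -d '40 days ago' "$demo_dir/old.log"
touch "$demo_dir/fresh.log"
list_expired_logs "$demo_dir" 30
rm -rf "$demo_dir"
```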

Health Check and Alerting

Monitor critical services and send alerts:

    #!/bin/bash
    set -euo pipefail

    SERVICES=("nginx" "postgresql" "redis")
    ALERT_EMAIL="admin@example.com"

    for service in "${SERVICES[@]}"; do
        if ! systemctl is-active --quiet "$service"; then
            echo "ALERT: Service $service is down on $(hostname)" | \
                mail -s "Service Alert: $service" "$ALERT_EMAIL"

            # Attempt restart; "|| true" keeps set -e from aborting the loop
            systemctl restart "$service" || true

            # Verify restart success
            sleep 5
            if systemctl is-active --quiet "$service"; then
                echo "Service $service restarted successfully" | \
                    mail -s "Service Recovered: $service" "$ALERT_EMAIL"
            fi
        fi
    done

Database Backup Automation

PostgreSQL backup with compression and rotation:

    #!/bin/bash
    set -euo pipefail

    DB_NAME="production"
    BACKUP_DIR="/backups/postgres"
    RETENTION_DAYS=14

    # Create backup with timestamp
    BACKUP_FILE="${BACKUP_DIR}/${DB_NAME}_$(date +%Y%m%d_%H%M%S).sql.gz"

    # Dump database with compression
    pg_dump "$DB_NAME" | gzip > "$BACKUP_FILE"

    # Remove backups older than retention period
    find "$BACKUP_DIR" -name "${DB_NAME}_*.sql.gz" -mtime +"$RETENTION_DAYS" -delete

    echo "Database backup saved to $BACKUP_FILE"

Scheduling Scripts with Systemd Timers

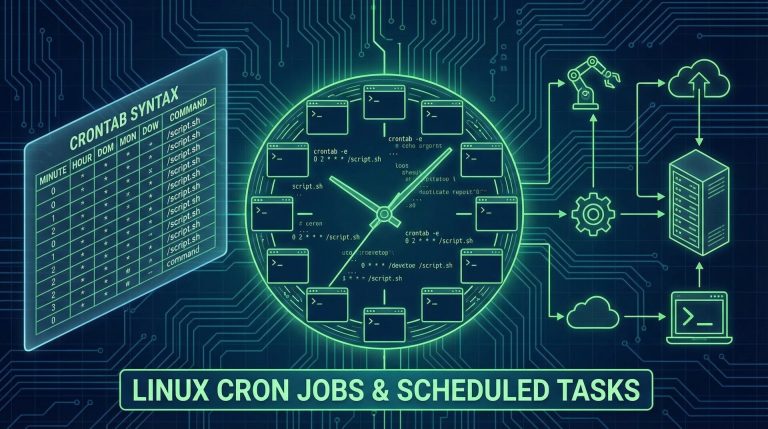

Cron is traditional, but systemd timers offer better integration and logging.

Create a service unit (/etc/systemd/system/backup.service):

    [Unit]
    Description=Daily backup job

    [Service]
    Type=oneshot
    ExecStart=/usr/local/bin/backup.sh
    User=backupuser

The service needs no [Install] section, because the timer starts it; enabling the service itself under multi-user.target would also run the backup on every boot.

Create a timer unit (/etc/systemd/system/backup.timer):

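    [Unit]
    Description=Daily backup timer

    [Timer]
    OnCalendar=daily
    Persistent=true

    [Install]
    WantedBy=timers.target

The timer activates backup.service by name, so no Requires= line is needed; adding one would start the backup immediately whenever the timer itself is started.

Enable and start: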

    sudo systemctl daemon-reload
    sudo systemctl enable backup.timer
    sudo systemctl start backup.timer

Check status:

    systemctl list-timers --all

Testing and Debugging Bash Scripts

Enable Debug Output

Add -x to your shebang during development:

    #!/bin/bash -x

This prints each command before execution, showing variable expansions.
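
Tracing a whole script can be noisy. As a hedged alternative, the sketch below turns tracing on for a single command inside a subshell and sets PS4, the prefix bash prints before each traced line. The helper name traced and the PS4 format are illustrative choices.

```shell
#!/bin/bash
# Sketch: trace a single command instead of the whole script.
# traced is a hypothetical helper; the PS4 format is one possible choice.

traced() {
  (
    # PS4 is the prefix bash prints before each traced command
    PS4='+ line ${LINENO}: '
    set -x
    "$@"
  )
}

# Trace output goes to stderr; normal output is unaffected
greeting="hello"
traced echo "$greeting world"
```

Because set -x is enabled inside the subshell, tracing ends automatically when the command finishes and the rest of the script stays quiet.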

Use ShellCheck for Static Analysis

Install on Debian:

    sudo apt install shellcheck

Run against your scripts:

    shellcheck --severity=warning script.sh

Fix all warnings; they often catch subtle bugs.
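
One common bug class that static analysis flags is the unquoted variable. The sketch below shows the effect directly; count_args is a hypothetical helper. ShellCheck reports this pattern as SC2086, which to my knowledge is an info-level finding, so it would appear only when the severity threshold includes info rather than at --severity=warning.

```shell
#!/bin/bash
# Sketch: the word-splitting bug that ShellCheck reports as SC2086.
# count_args is a hypothetical helper that prints how many arguments it got.

count_args() {
  echo "$#"
}

file_name="monthly report.txt"

# Unquoted: the shell splits the value on the space into two arguments
# shellcheck disable=SC2086
count_args $file_name

# Quoted: the value stays one argument
count_args "$file_name"
```

With a command like rm in place of count_args, the unquoted form would act on two different paths, neither of which is the intended file.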

Test in Isolated Environments

Use Docker for testing:

    docker run -it --rm -v "$(pwd)":/scripts debian:12 bash
    # Inside the container:
    cd /scripts
    ./your_script.sh

This ensures your script works on a clean Debian installation without your specific environment.

Advanced Patterns: Integration with Modern Tools

OpenTelemetry for Monitoring

Send script execution traces to observability platforms:

    #!/bin/bash
    set -euo pipefail

    # Wrap script in telemetry
    otel-cli exec --service "backup-script" --name "daily-backup" \
        /usr/local/bin/actual_backup.sh

Git Integration for Versioning

Track configuration changes:

    #!/bin/bash
    set -euo pipefail

    CONFIG_DIR="/etc/myapp"

    # Commit current state
    cd "$CONFIG_DIR"
    git add -A
    git commit -m "Automated config update $(date +%Y-%m-%d)" || true
    git push origin main

Docker Integration

Scripts that manage containers:

    #!/bin/bash
    set -euo pipefail

    # Pull latest images
    docker-compose pull

    # Rebuild and recreate the web service without touching its dependencies
    # (the container is replaced, so expect a brief interruption)
    docker-compose up -d --no-deps --build web

    # Clean up old images
    docker image prune -f

Security Best Practices

Never Hardcode Credentials

Use environment variables or secret management:

    #!/bin/bash
    set -euo pipefail

    # Bad: hardcoded password
    # DB_PASSWORD="secret123"

    # Good: read from environment or file
    DB_PASSWORD="${DB_PASSWORD:-$(cat /etc/secrets/db_password)}"

Validate Input

If scripts accept parameters, validate rigorously:

    #!/bin/bash
    set -euo pipefail

    # Fail with a usage message if no argument is given
    USERNAME="${1:?Usage: $0 USERNAME}"

    # Validate username format (alphanumeric only)
    if [[ ! "$USERNAME" =~ ^[a-zA-Z0-9]+$ ]]; then
        echo "Error: Invalid username format" >&2
        exit 1
    fi

Use Secure Temporary Files

    # Bad: predictable temp file
    # TMPFILE="/tmp/mydata"

    # Good: secure random temp file
    TMPFILE=$(mktemp)
    # Single quotes: expand $TMPFILE when the trap runs, and quote it safely
    trap 'rm -f "$TMPFILE"' EXIT

Troubleshooting Common Issues

Context Drift – Scripts Break on Different Systems

Problem: Script works on your Debian 12 server but fails on Ubuntu 24.04.

Solutions:

- Test in containers representing target systems

- Avoid assuming specific tool versions (check compatibility)

- Use explicit paths (/usr/bin/grep vs grep)
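
Checking for required tools at the start of a script turns a confusing mid-run failure into a clear early message. The following is a minimal sketch; missing_commands is a hypothetical helper and the list of commands is only an example.

```shell
#!/bin/bash
# Sketch: report required commands that are missing from PATH.
# missing_commands is a hypothetical helper; the command list is illustrative.

missing_commands() {
  local cmd
  for cmd in "$@"; do
    # command -v succeeds only if the shell can resolve the name
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "$cmd"
    fi
  done
}

# Typical use near the top of a script
missing=$(missing_commands rsync gzip find)
if [ -n "$missing" ]; then
  echo "Missing required commands: ${missing//$'\n'/ }" >&2
fi
echo "bash version: ${BASH_VERSION}"
```

A production script would normally exit with a non-zero status when the list is not empty; the demo only prints a warning so it can run anywhere.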

Locale Issues

Date parsing and sorting can fail with different locales:

    # Force consistent locale for scripts
    export LC_ALL=C

Permission Errors

Scripts run via cron execute with a minimal environment (a short PATH and few other variables), so set what the script needs explicitly:

    PATH=/usr/local/bin:/usr/bin:/bin
    HOME=/root

Conclusion: Bash Scripting in the AI Era

The 2026 paradigm for Bash scripting on Debian servers shifts from memorization to architecture. AI tools handle syntax, but you must:

- Define requirements precisely

- Audit generated code for failure modes

- Understand patterns like idempotency and atomicity

- Test thoroughly in isolated environments

- Monitor production scripts with modern telemetry

Master these principles, and you’ll build robust automation that survives in production for years. Bash isn’t going anywhere: it’s the glue holding Linux infrastructure together. Embrace AI to accelerate development, but invest in the fundamentals, because they make the difference between scripts that work and scripts that survive.

Hi, I’m Mark, the author of Clever IT Solutions: Mastering Technology for Success. I am passionate about empowering individuals to navigate the ever-changing world of information technology. With years of experience in the industry, I have honed my skills and knowledge to share with you. At Clever IT Solutions, we are dedicated to teaching you how to tackle any IT challenge, helping you stay ahead in today’s digital world. From troubleshooting common issues to mastering complex technologies, I am here to guide you every step of the way. Join me on this journey as we unlock the secrets to IT success.